- Home

- Services

- About

- News

- Contact

- Jaikoz manual pdf

- Xamarin for visual studio 2015 torrent

- Codename eagle soundtrack

- M-audio fast track ultra 8

- Semisonic closing time official music video

- Backup exec 2010 jobs not running

- Muddy waters electric mud zip

- Windows 10 1809 iso file download

- Ted williams outboard motor impeller

- Adobe audition cs6 won t play

- Skyrim romance mod 2-0

- 2013 dodge service def system see dealer

- Trimble business center tutorials pdf

- Skyrim pc game consol

- 2pac dear mama mp3 download free

- Bricscad mtext

- Nada sms suara tokek

- Movie harry potter in hindi

- Falguni pathak gay

- Bad security mode dfs cdma tool cps error

- Vmix 18

- Crazytalk 8 system requirements

- Navicat data modeler dictionary

- Vacron viewer settings

- Cartoon octopus

Pass the `find()` API call into the `list()` function to have it return a list containing all of the collection's documents that matched the query, along with their respective data. It should be of type DBObject or convertible to DBObject. I am using PyMongo to simply iterate over a Mongo collection, but I'm struggling with handling large MongoDB date objects.
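Wrapping `find()` in `list()` is convenient, but it holds every matching document in memory at once. For a large collection it is usually safer to iterate the cursor lazily. The sketch below is a minimal illustration, assuming `collection` is a PyMongo `Collection` (or any object with a compatible `find()`); `fetch_all` and `iter_documents` are hypothetical helper names, not PyMongo APIs. `Cursor.batch_size()` is a real PyMongo method that sets how many documents the driver requests per round trip.

```python
from typing import Any, Dict, Iterator, List, Mapping, Optional


def fetch_all(collection: Any, query: Optional[Mapping[str, Any]] = None) -> List[Dict[str, Any]]:
    """Eagerly load every matching document into a Python list.

    Assumes a PyMongo-style collection. Fine for small result sets; for
    large ones the whole list sits in memory.
    """
    # list() drains the cursor returned by find() in one go.
    return list(collection.find(query or {}))


def iter_documents(
    collection: Any,
    query: Optional[Mapping[str, Any]] = None,
    batch_size: int = 1000,
) -> Iterator[Dict[str, Any]]:
    """Lazily yield matching documents one at a time.

    The cursor is asked for documents in batches of roughly `batch_size`,
    so only the current batch needs to be held in memory.
    """
    cursor = collection.find(query or {}).batch_size(batch_size)
    for doc in cursor:
        yield doc
```

With a real client this might look like `for doc in iter_documents(MongoClient()["db"]["coll"], {"status": "active"}): ...`, where `db`, `coll` and `status` are placeholder names.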

# Navicat data modeler dictionary generator

Loop through the letters in the word "banana": `for x in "banana": print(x)`.

What is the most efficient way in Python to iterate over a large file (10GB+)? This is not a five-line answer to your question, but there was an excellent tutorial given at PyCon'08 called "Generator Tricks for System Programmers".

I have been trying to create a function which calls a paginated API, loops through each of the pages and adds a JSON value to an array. In simple words, when we call a find…

Let's walk through an example to see the different ways to page through data in MongoDB. To understand this example, you should have knowledge of the following Java programming topics: …

In essence, a collection is a list of dictionaries. On a transactional storage engine like InnoDB, you can expect counts to be accurate to within 4% of the actual number of rows.

# MongoDB: iterate over a large collection

Each aggregation pipeline stage is limited to 100 megabytes of RAM. To allow pipeline processing to take up more space, set the `allowDiskUse` option to `true` so that stages can write data to temporary files. Note that `batch_size` cannot override MongoDB's internal limits on the amount of data it will return to the client in a single batch (i.e. …).
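One common way to page through a large MongoDB collection is range-based ("keyset") pagination on `_id`: each query resumes strictly after the last `_id` already seen, instead of using `skip()`, which makes the server walk past every earlier document. The generator below is a minimal sketch under the assumption that `collection` behaves like a PyMongo `Collection` whose `find()` returns a cursor supporting `sort()` and `limit()`; `iter_pages` and `page_size` are hypothetical names, not part of PyMongo.

```python
from typing import Any, Dict, Iterator, List

ASCENDING = 1  # same value as pymongo.ASCENDING; defined here to avoid the import


def iter_pages(collection: Any, page_size: int = 1000) -> Iterator[List[Dict[str, Any]]]:
    """Yield pages of documents in _id order using keyset (range) pagination.

    Assumes `collection` behaves like a PyMongo Collection: find(filter)
    returns a cursor that supports .sort(key, direction) and .limit(n).
    """
    if page_size <= 0:
        # PyMongo treats limit(0) as "no limit", which would defeat paging.
        raise ValueError("page_size must be positive")
    last_id = None
    while True:
        # First page: no filter. Later pages: resume strictly after the last _id seen.
        query = {} if last_id is None else {"_id": {"$gt": last_id}}
        page = list(collection.find(query).sort("_id", ASCENDING).limit(page_size))
        if not page:
            return
        yield page
        if len(page) < page_size:
            return  # a short page means the collection is exhausted
        last_id = page[-1]["_id"]
```

Sorting on `_id` can use the default `_id` index. Paging on another field would need a unique, indexed sort key (or a compound key with `_id` as a tie-breaker) so that no document is skipped or repeated between pages.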

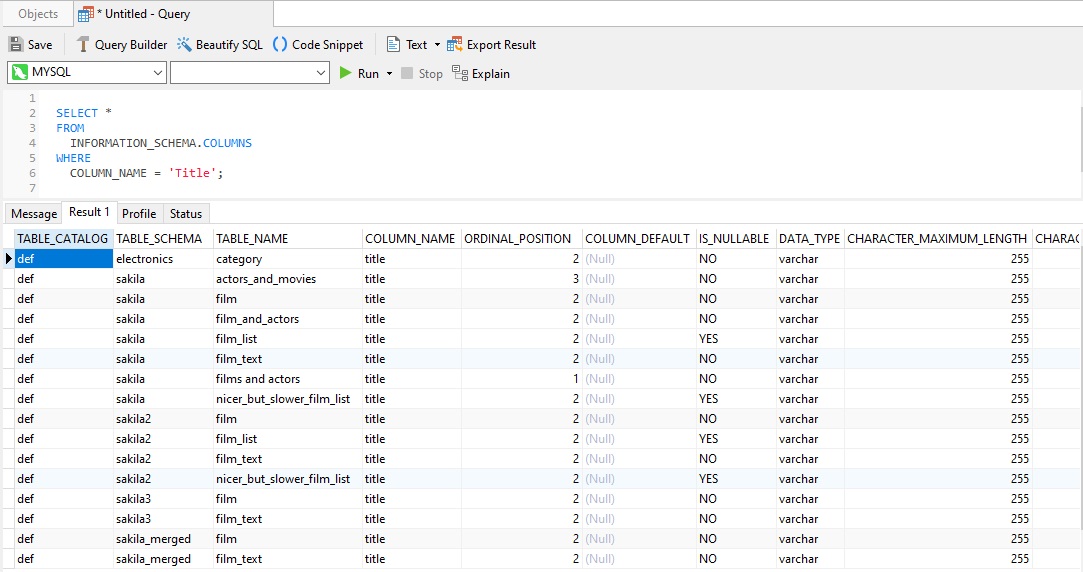

You don't have to run a count query against every table to get the number of rows. That would be tedious and would likely require external scripting if you planned on running it more than once.

The INFORMATION_SCHEMA database is where each MySQL instance stores information about all the other databases that the MySQL server maintains. Also sometimes referred to as the data dictionary and system catalog, it's the ideal place to look up information about databases, tables, the data type of a column, or access privileges. The INFORMATION_SCHEMA `TABLES` table provides information about…what else…tables in your databases. By querying it, you can get row counts for every table with a single query.

It's easy enough to obtain a row count for one database. Just add a WHERE clause with the condition that the `table_schema` column matches your database name.

Obtaining a row count for all databases within a schema takes a little more effort. For that, we have to employ a prepared statement. Within the statement, the `group_concat()` function packs multiple rows into a single string in order to turn a list of table names into a string of many counts connected by unions: it builds a `select count(1) from db.tablename` per table, aggregates those rows into a single string connected by unions, and leaves out the system schemas `('performance_schema', 'mysql', 'information_schema')`. Our concatenated select statements are saved in a variable so that we can run them as a prepared statement (`PREPARE s FROM …`, then `EXECUTE s` and `DEALLOCATE PREPARE s`).

# A final word regarding speed and accuracy

These queries run very fast and produce exact results on MyISAM tables. However, transactional storage engines such as InnoDB do not keep an internal count of rows in a table. Rather, transactional storage engines sample a number of random pages in the table, and then estimate the total rows for the whole table. A further consequence of MVCC, a feature that allows concurrent access to rows, is that at any one point in time there may be multiple versions of a row. Therefore, the actual `count(1)` will depend on the time your transaction started and on its isolation level.
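The union-of-counts approach (read table names from the catalog, build one `select count(1) from <table>` per table, join them with `union all`, then execute the combined statement) can be tried without a MySQL server. The sketch below swaps in Python's built-in `sqlite3` module as an assumption for illustration: SQLite's `sqlite_master` table stands in for `INFORMATION_SCHEMA.TABLES`, and a Python string join stands in for `group_concat()` plus the prepared statement. `row_counts` and `quote_ident` are hypothetical helper names.

```python
import sqlite3
from typing import Dict


def quote_ident(name: str) -> str:
    """Quote an SQLite identifier, doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def row_counts(conn: sqlite3.Connection) -> Dict[str, int]:
    """Return {table_name: row_count} for every user table in the database."""
    # Read table names from the catalog, skipping SQLite's internal tables
    # (the analogue of excluding mysql/performance_schema/information_schema).
    tables = [
        name
        for (name,) in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        )
    ]
    if not tables:
        return {}
    # One "select <name>, count(1) from <table>" per table, glued together with
    # UNION ALL, mirroring the string that group_concat() builds in MySQL.
    parts = [f"SELECT ? AS table_name, COUNT(1) AS n FROM {quote_ident(t)}" for t in tables]
    sql = " UNION ALL ".join(parts)
    # Table names are bound as parameters for the label column.
    return {name: n for name, n in conn.execute(sql, tables)}
```

Because these are real `COUNT` queries rather than catalog estimates, they reflect the rows visible to the query. SQLite caps a compound SELECT at 500 terms by default (`SQLITE_MAX_COMPOUND_SELECT`), so a database with more tables would need batching. In the MySQL version, `group_concat()` output is truncated at `group_concat_max_len` (1024 bytes by default), which a schema with many tables can easily exceed.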

# Navicat data modeler dictionary how to

In today's third and final instalment, we'll learn how to obtain row counts from all of the tables within a database or an entire schema. In last week's blog, Getting Advanced Row Counts in MySQL (Part 2), we employed the native COUNT() function to tally unique values as well as those which satisfy a condition.
